NeuralByte's weekly AI rundown - 3rd March

Challenging Nvidia’s AI Dominance, AI investments, Claude 3 dethroning GPT-4

Greetings fellow AI enthusiasts!

I’m sorry for the late arrival of this edition; I was on vacation with my family. I made this one a little bigger and included news from Monday. Inside, you will find news about how companies are trying to challenge Nvidia’s leadership position in the AI field, a one-step image generator that drastically decreases generation time and energy consumption, and how Claude surpassed GPT-4 in the Chatbot Arena.

Dear subscribers,

Thanks for reading my newsletter and supporting my work. I have more AI content to share with you soon. Everything is free for now, but if you like my work, please consider becoming a paid subscriber. This will help me create more and better content for you.

Now, let's dive into the AI rundown to keep you in the loop on the latest happenings:

🔥 Challenging Nvidia’s AI Dominance Through Software Innovation

📱 Microsoft’s Copilot to Run Locally

🧠 Stability AI’s Leadership Shakeup

📭 The Rise of Generative AI in Enterprise

🤖 MIT’s One-Step Image Generator

💻 OpenAI’s Sora Captivates Hollywood

💬 Apple to Unveil AI Strategy at Worldwide Developers Conference on June 10

🧠 Jamba: The First Production-Grade Mamba-Based Model

🤯 Microsoft and OpenAI’s Ambitious $100 Billion AI Supercomputer Project

😄 Grok 1.5: The Next Evolution of X’s Chatbot Technology

👾 Amazon and Anthropic’s Strategic AI Collaboration

💥 OpenAI Unveils Voice Engine

⬆️ Claude 3 Opus Surpasses GPT-4 in Chatbot Arena

And more!

Challenging Nvidia’s AI Dominance Through Software Innovation

In the realm of artificial intelligence, Nvidia has long been a titan, with its AI chips becoming essential for developers in the generative AI space. However, a new coalition of tech giants is emerging to challenge Nvidia’s stronghold by targeting the very foundation of its success: its software platform. This article delves into the strategic moves by companies like Qualcomm, Google, and Intel as they unite to create a more open ecosystem for AI development.

Nvidia’s $2.2 trillion market cap is a testament to its dominance in producing AI chips, which power applications for customers ranging from startups to tech behemoths like Microsoft and Google. Nearly two decades of accumulated software have made the company a formidable force, with over 4 million developers relying on its CUDA software platform. Yet this reliance is precisely what the new coalition aims to disrupt. By focusing on Nvidia’s software, they hope to offer developers an alternative path away from Nvidia’s ecosystem.

The UXL Foundation, spearheaded by Intel with its OneAPI technology, is at the forefront of this initiative. The foundation’s goal is to create a suite of software and tools that can support various AI accelerator chips, fostering an open-source project that allows computer code to run on any machine, regardless of the underlying chip and hardware. This move could democratize AI development, giving developers the freedom to choose their hardware without being locked into Nvidia’s platform.

The details:

Nvidia’s market cap of $2.2 trillion highlights its influence in the AI chip market.

Qualcomm, Google, and Intel are leading a coalition to challenge Nvidia’s dominance by targeting its software platform.

The UXL Foundation aims to create an open-source suite of software and tools compatible with multiple AI accelerator chips.

OneAPI technology, developed by Intel, is a key component of the UXL Foundation’s strategy to break Nvidia’s software grip.

The initiative seeks to promote productivity and choice in hardware for AI development, offering an alternative to Nvidia’s CUDA platform.

Why it’s important:

The push to break Nvidia’s grip on AI is more than just a power struggle among tech giants; it represents a pivotal shift towards an open ecosystem that could significantly benefit the AI community. By providing developers with more choices and reducing dependency on a single company’s platform, innovation can flourish. This is particularly crucial as AI becomes increasingly integrated into various sectors, necessitating a diverse range of hardware and software solutions to meet different needs.

Moreover, the success of such an open-source initiative could accelerate the adoption of AI technologies, making them more accessible to a broader audience. It could also spur competition, leading to more advanced and cost-effective AI solutions. Ultimately, this movement towards an open AI ecosystem could pave the way for a future where AI is more inclusive, versatile, and powerful.

Microsoft’s Copilot to Run Locally

The tech world is abuzz with the latest developments in AI PCs. Intel’s AI Summit in Taipei has shed light on Microsoft’s plans for its Copilot AI service, which is set to transition from cloud-based operations to local execution on personal computers. This move is poised to redefine the AI PC landscape, with Microsoft and Intel co-developing a new standard that includes a Neural Processing Unit (NPU), CPU, GPU, Microsoft’s Copilot, and a dedicated Copilot key on the keyboard.

As the first wave of AI PCs hits the market, Intel reveals future requirements that will push the boundaries of AI processing power. The next-gen AI PCs will demand a staggering 40 TOPS in the NPU, a significant leap from the current offerings by Intel and AMD. This evolution promises to enhance local computation capabilities, offering benefits in latency, performance, and privacy.

The shift towards local processing of AI workloads marks a strategic move by Microsoft to optimize the user experience. By running Copilot on the NPU, Microsoft aims to minimize the impact on battery life, ensuring that the GPU and CPU remain available for other tasks. This focus on customer experience is central to Microsoft’s vision for the future of AI PCs.

The details:

Local Execution: Microsoft’s Copilot AI service will transition to running locally on PCs, offering improved latency, performance, and privacy.

40 TOPS Requirement: Next-gen AI PCs will require NPUs with 40 TOPS of performance, a significant increase from current models.

Battery Life Optimization: Microsoft insists on running Copilot on the NPU to minimize the impact on battery life and free up the GPU and CPU.

Intel’s Roadmap: Intel plans to address every segment of the AI market with its next-gen processors, including the Lunar Lake processors with enhanced AI performance.

Developer Engagement: Intel’s AI PC Accelerator Program aims to support 300 new AI-enabled features, with many optimized specifically for Intel’s silicon.

Why it’s important:

The integration of AI into personal computing is a game-changer, offering unprecedented capabilities and efficiencies. Microsoft’s initiative to run Copilot locally on PCs signifies a major step towards more responsive and private AI interactions. The requirement for 40 TOPS of NPU performance sets a new standard in the industry, driving innovation and competition among chip manufacturers. This evolution not only benefits tech enthusiasts but also business owners who rely on cutting-edge technology to stay ahead. The AI PC revolution is here, and it’s reshaping the way we interact with technology in our daily lives.

Stability AI’s Leadership Shakeup

Emad Mostaque, the founder of Stability AI, has stepped down from his role as CEO. This decision comes after a tumultuous period marked by internal disputes and a series of high-profile departures within the company. Stability AI, known for its popular image generation tool Stable Diffusion, has been a significant player in the generative AI space, attracting considerable attention and investment.

The details:

Emad Mostaque resigns: The founder of Stability AI has resigned to focus on decentralized AI, following a wave of departures at the startup.

Interim leadership: Chief Operating Officer Shan Shan Wong and Chief Technology Officer Christian Laforte will serve as interim co-CEOs.

Investor and staff turmoil: The resignation follows investor mutiny and staff exodus that left the tech company in turmoil.

Legal challenges: Stability AI faces a copyright lawsuit from Getty Images Holdings Inc., adding to its financial and operational challenges.

Future of generative AI: The company’s struggles reflect the broader challenges faced by generative AI startups in a competitive and resource-intensive industry.

Why it’s important:

The resignation of Emad Mostaque signifies a pivotal moment for Stability AI and the generative AI industry at large. As the company grapples with leadership changes and legal battles, the future of generative AI remains uncertain. This technology, which has the potential to revolutionize content creation across various mediums, now faces a critical test of resilience and adaptability. The outcome of Stability AI’s current challenges will likely influence the direction of the entire field, underscoring the importance of stable and visionary leadership in the rapidly evolving landscape of artificial intelligence.

The Rise of Generative AI in Enterprise

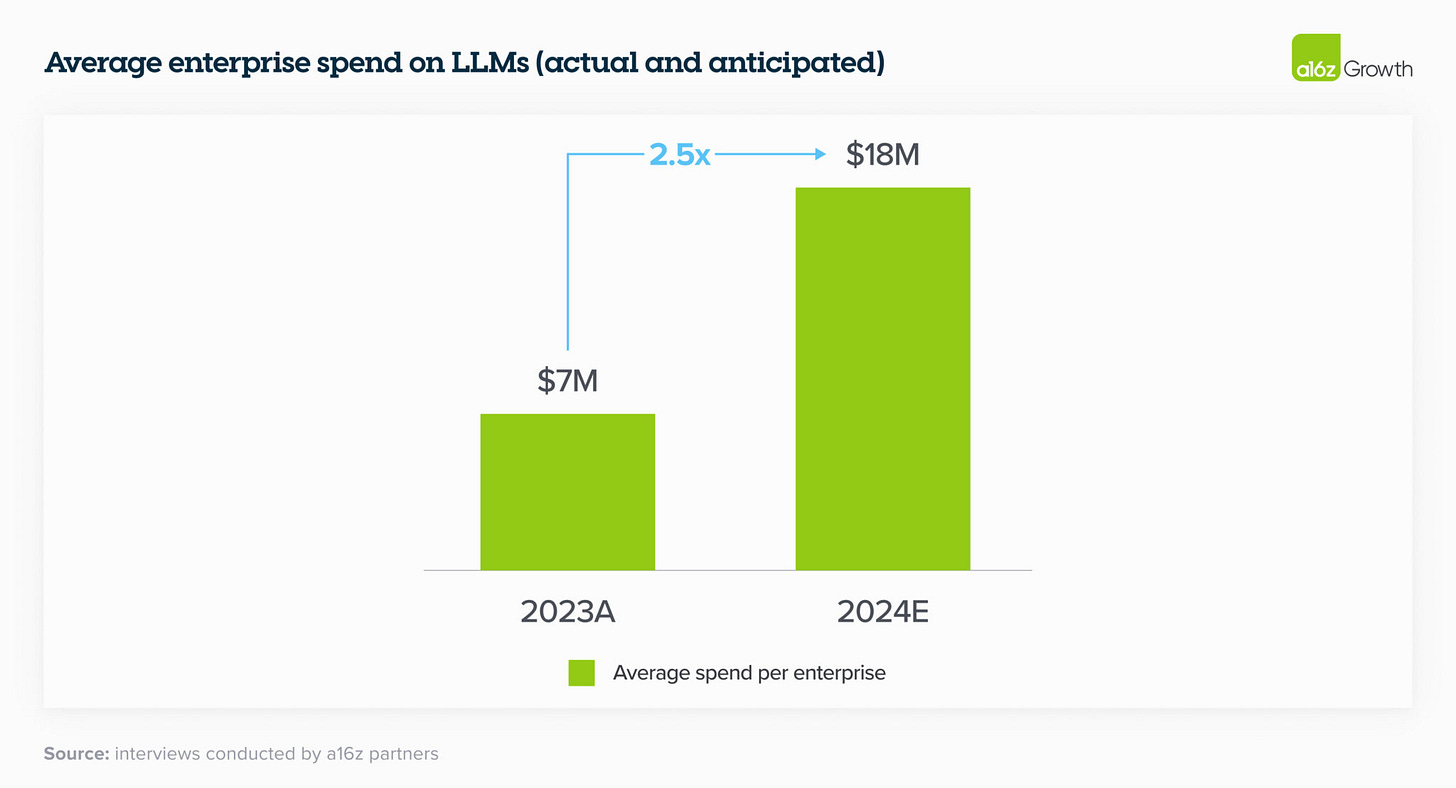

Generative AI has rapidly transformed the consumer landscape, achieving over a billion dollars in consumer spend in 2023. In 2024, the enterprise sector is poised to embrace genAI even more extensively. Last year’s consumer fascination with AI companions and creative models is giving way to a surge in enterprise applications, from a few initial use cases to a broader, production-ready deployment.

The details:

Enterprise Engagement: In 2023, consumer spend on Generative AI (genAI) reached over a billion dollars. In 2024, enterprises are expected to spend multiples of that amount, with budgets for genAI nearly tripling.

Budget Allocation: The average spend on foundation model APIs, self-hosting, and fine-tuning models was $7M across companies surveyed. Enterprises plan to increase their genAI spend by 2x to 5x in 2024.

Shift from Innovation to Standard Software: Previously, genAI spend came from one-time “innovation” budgets. In 2024, many leaders are shifting this spend to permanent software line items, with less than a quarter relying on innovation budgets.

ROI Measurement: Enterprises are measuring Return on Investment (ROI) by increased productivity, with plans to develop more tangible metrics like revenue generation, savings, efficiency, and accuracy gains.

Model Diversity: Enterprises are moving from using a single model to testing and using multiple models to tailor use cases based on performance, size, and cost, and to avoid vendor lock-in.

Open Source Preference: There’s a significant shift towards open source models, with 46% of survey respondents preferring open source going into 2024, aiming for a 50/50 split between open and closed source models.

Why it’s important:

The enterprise sector’s embrace of generative AI signifies a major shift in technology adoption and investment. With budgets increasing and a focus on tangible ROI, genAI is set to revolutionize productivity and efficiency in the business world. The move towards open-source models also indicates a growing preference for control and customization, which could redefine the landscape of AI solutions in the enterprise. This trend underscores the strategic importance of genAI as a tool for innovation and competitive advantage in the global market.

MIT’s One-Step Image Generator

MIT researchers have made a groundbreaking advancement in the realm of AI-generated art with their Distribution Matching Distillation (DMD) method. This framework collapses the multi-step process of traditional diffusion models into a single step, dramatically accelerating image generation while maintaining high quality. The DMD method, which is 30 times faster than Stable Diffusion v1.5, could potentially revolutionize content creation across various fields, including design, drug discovery, and 3D modeling. By leveraging a teacher-student model, the DMD approach ensures stable training and high-quality output, promising a future where high-quality real-time visual editing becomes a reality. The work was supported by various grants and will be presented at the Conference on Computer Vision and Pattern Recognition.

OpenAI’s Sora Captivates Hollywood

In a bold move to integrate AI into the heart of the film industry, OpenAI has been courting major Hollywood studios with its cutting-edge video generation model, Sora. Paramount, Universal, and Warner Bros Discovery have witnessed firsthand the capabilities of Sora, which can craft detailed videos from simple text prompts. Despite concerns over AI’s impact on creative jobs, the technology has sparked discussions on cost-saving benefits and production efficiencies. With Sora’s current limitations, such as generating videos under one minute and occasional logical errors, OpenAI is yet to announce a release date, focusing instead on refining the model and addressing safety considerations before commercialization. As Hollywood navigates the implications of AI in filmmaking, Sora stands at the forefront of a potential paradigm shift in content creation.

Apple to Unveil AI Strategy at Worldwide Developers Conference on June 10

Apple is set to reveal its AI strategy at the Worldwide Developers Conference starting on June 10, with a focus on integrating AI into its next major software updates. The event, announced on March 26 and running from June 10 to June 14, will feature free online access for developers and in-person opening day announcements at Apple’s Cupertino campus.

The iOS 18 upgrade is touted as the most significant revamp of iPhone software, emphasizing AI enhancements. While not launching its own AI chatbot, Apple is exploring partnerships with companies like Google and OpenAI for generative AI services.

Jamba: The First Production-Grade Mamba-Based Model

AI21’s Jamba, the world’s first production-grade Mamba-based model, has been unveiled, marking a significant advancement in language model technology. By combining the Mamba structured state space model (SSM) with the traditional Transformer architecture, Jamba overcomes the limitations of pure SSM models. With a 256K context window, Jamba showcases impressive gains in throughput and efficiency, setting a new benchmark in the field.

The release of Jamba, under the Apache 2.0 license, invites the community to explore and optimize this innovative hybrid architecture. Accessible through the NVIDIA API catalog as an inference microservice, Jamba is poised to revolutionize enterprise application development with its cutting-edge capabilities.

Jamba’s debut signifies two major breakthroughs: the successful integration of Mamba with Transformer architecture and the elevation of the hybrid SSM-Transformer model to production-grade standards. This leap forward addresses the drawbacks of conventional Transformer models, offering a new horizon for language model development.

The details:

Hybrid Architecture: Jamba combines Transformer, Mamba, and mixture-of-experts (MoE) layers, optimizing memory, throughput, and performance.

Efficient Scaling: Jamba is the first to scale Mamba beyond 3B parameters, reaching production-grade scale with a hybrid structure.

Innovative Design: Features a blocks-and-layers approach, integrating attention and Mamba layers with MLP, achieving a balanced ratio of one Transformer layer per eight total layers.

MoE Utilization: Streamlines active parameters at inference, enhancing model capacity without increasing compute requirements.

Performance Excellence: Delivers 3x throughput on long contexts compared to Transformer-based models, fitting 140K context on a single GPU.
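To make the layer ratio and the MoE point above more concrete, here is a minimal Python sketch. It is not AI21’s implementation: the position of the attention layer inside the block (`attention_index`), the rule that every second layer uses MoE (`moe_every`), and all parameter counts are hypothetical values chosen only for illustration. The only figure taken from the article is the ratio of one attention (Transformer) layer per eight total layers.

```python
from collections import Counter


def build_block(layers_per_block=8, attention_index=4, moe_every=2):
    """Describe one hybrid block as a list of (mixer, feed_forward) pairs.

    attention_index and moe_every are illustrative assumptions,
    not values stated in the article.
    """
    block = []
    for i in range(layers_per_block):
        # Exactly one layer in the block uses attention; the rest use Mamba.
        mixer = "attention" if i == attention_index else "mamba"
        # Assumed rule: every second layer swaps its MLP for an MoE layer.
        feed_forward = "moe" if i % moe_every == 1 else "mlp"
        block.append((mixer, feed_forward))
    return block


def moe_parameter_counts(shared, per_expert, num_experts, top_k):
    """Return (total, active) parameter counts for a simple MoE model.

    Only top_k experts run per token, so the active count is smaller
    than the total even though all experts add capacity.
    """
    total = shared + per_expert * num_experts
    active = shared + per_expert * top_k
    return total, active


block = build_block()
mixers = Counter(mixer for mixer, _ in block)
print(mixers["attention"], "attention layer,", mixers["mamba"], "Mamba layers")

# Hypothetical sizes (in arbitrary units) to show total vs. active parameters.
total, active = moe_parameter_counts(shared=10, per_expert=5, num_experts=16, top_k=2)
print("total:", total, "active per token:", active)
```

The second function shows why MoE can raise model capacity without a matching rise in compute: every expert counts toward the total, but each token only pays for the few experts it is routed to.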

Why it’s important:

Jamba’s emergence is pivotal for the future of AI, offering a more efficient and cost-effective model than its predecessors. Its ability to handle long contexts with increased throughput paves the way for more accessible deployment and experimentation. As Jamba continues to evolve through community-driven enhancements, its potential to reshape enterprise AI solutions is immense. The release of Jamba not only showcases AI21’s commitment to innovation but also sets a new standard for language model development, promising a transformative impact on the industry.

Microsoft and OpenAI’s Ambitious $100 Billion AI Supercomputer Project

Microsoft and OpenAI are joining forces on a colossal data center project, featuring an AI supercomputer named “Stargate,” set to be operational by 2028. The project, estimated to cost up to $100 billion, is a response to the surging demand for generative AI technology and its data center requirements.

The details:

Stargate Supercomputer: Slated for launch in 2028, this AI supercomputer is part of a five-phase plan between Microsoft and OpenAI, with Stargate being the final phase.

Skyrocketing Costs: The project’s cost is projected to be 100 times more than some of the largest existing data centers, with expenses for the overall multi-phase plan potentially exceeding $115 billion.

AI Chip Demand: A significant portion of the cost involves acquiring AI chips, which are sold at high prices, such as Nvidia’s “Blackwell” B200 chip, priced between $30,000 and $40,000.

Infrastructure Innovations: Microsoft aims to push the frontier of AI capability with next-generation infrastructure designed to work with chips from various suppliers.

Phased Development: Currently in the third phase, Microsoft and OpenAI are working towards the smaller, fourth-phase supercomputer, expected around 2026, before Stargate.

Why it’s important:

The partnership between Microsoft and OpenAI signifies a major leap in AI infrastructure development. The Stargate supercomputer represents the pinnacle of this collaboration, promising to handle advanced tasks beyond the capabilities of traditional data centers. This project underscores the critical role of AI in shaping the future of technology and its potential to revolutionize industries by offering unprecedented computational power and intelligence. For business owners and AI enthusiasts, the realization of such a project means access to more sophisticated AI tools and services, driving innovation and competitiveness in the market.

Grok 1.5: The Next Evolution of X’s Chatbot Technology

xAI, the company behind the Grok chatbot on X, is set to enhance the AI landscape with the upcoming release of Grok 1.5, an upgraded version of its chatbot. This iteration promises to refine user interactions through advanced natural language processing, aiming to bridge the gap between human and machine communication. With a focus on nuanced dialogue and contextual understanding, Grok 1.5 is poised to offer a more intuitive and seamless experience, catering to the intricate needs of AI enthusiasts and business owners alike. As anticipation builds, the tech community is eager to see what this model can do.

Amazon and Anthropic’s Strategic AI Collaboration

Amazon’s partnership with Anthropic is setting a new precedent in the generative AI landscape. By choosing Amazon Web Services (AWS) as its primary cloud provider, Anthropic is not only committing to safety research and the development of future foundation models but also ensuring AWS customers globally can access these advancements. The collaboration has already yielded the Claude 3 family of models, which surpasses others in reasoning, math, and coding benchmarks. This synergy between Amazon’s robust infrastructure and Anthropic’s AI expertise is poised to revolutionize industries, from sports to life sciences, by fostering secure, innovative AI applications. Moreover, the union is strengthened by a substantial investment, cementing a shared vision for the transformative potential of generative AI.

OpenAI Unveils Voice Engine

OpenAI has introduced a groundbreaking text-to-voice generation platform named Voice Engine, capable of creating a synthetic voice from just a 15-second audio sample. This innovative AI model, which has been in development since late 2022, is designed to power the Read Aloud feature in ChatGPT. It can read out text prompts in the speaker’s language or translate them into other languages, showcasing its versatility and potential for various applications.

The technology is currently being tested by a select group of companies, including Age of Learning and HeyGen, to generate pre-scripted voice-over content and provide real-time, personalized responses. OpenAI’s careful approach to deployment, focusing on small-scale implementations, reflects its commitment to developing responsible AI technologies.

Voice Engine’s capabilities extend beyond simple text-to-speech functions. It has already been integrated into preset voices for the text-to-speech API and ChatGPT’s Read Aloud feature. The model’s training involved a mix of licensed and publicly available data, ensuring a robust and versatile performance across different use cases.

The details:

Voice Engine: A platform that synthesizes voices from a short audio clip.

Multilingual Capabilities: It can read prompts in the original language or translate them.

Selective Deployment: Limited access to carefully chosen companies for testing.

Ethical Considerations: OpenAI requires partners to follow strict usage policies.

Innovative Applications: Used for educational content and real-time interactions.

Why it’s important:

Voice Engine represents a significant leap in AI voice technology, offering a glimpse into a future where synthetic voices are indistinguishable from human ones. Its ability to generate voices from minimal samples could revolutionize industries like entertainment, education, and customer service. However, the ethical implications of such technology are profound, necessitating careful consideration and regulation. OpenAI’s cautious approach and stringent policies underscore the importance of responsible AI development, ensuring that innovations like Voice Engine enhance our lives without compromising our values or security.

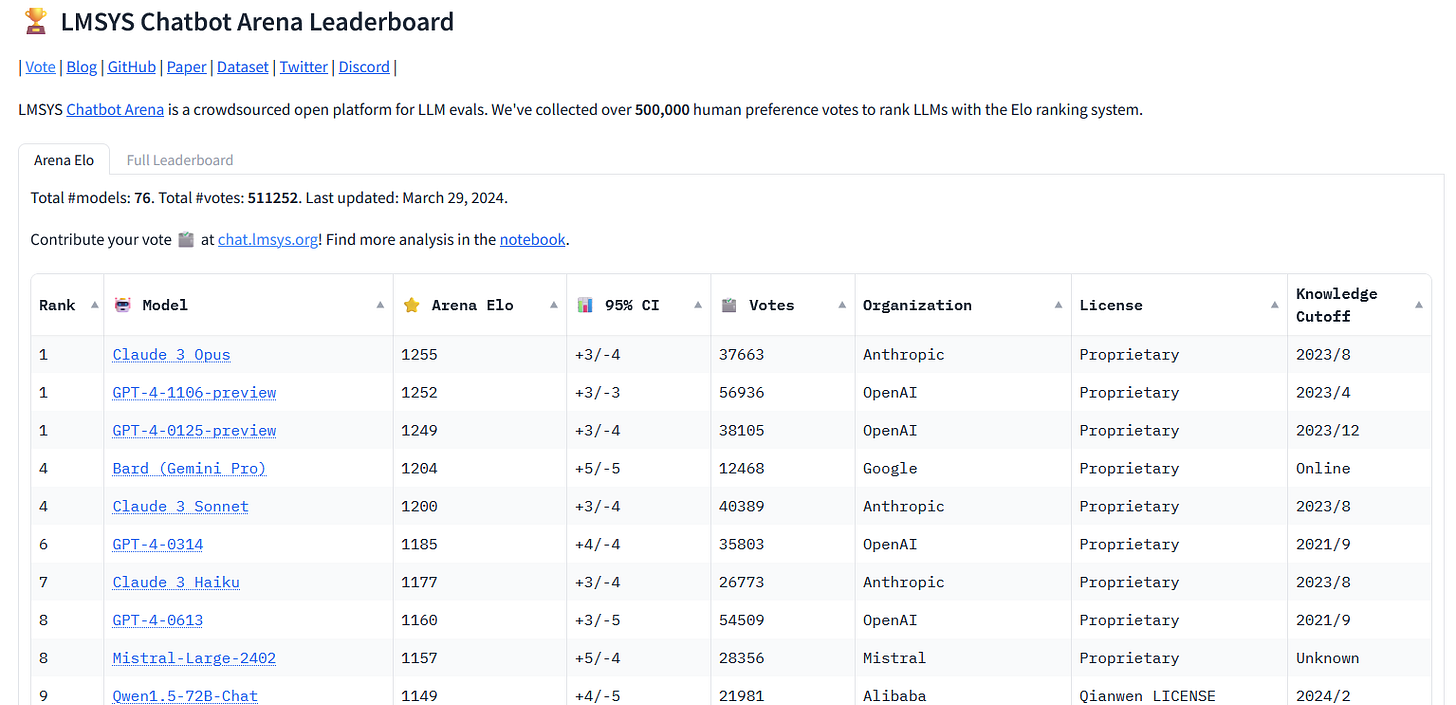

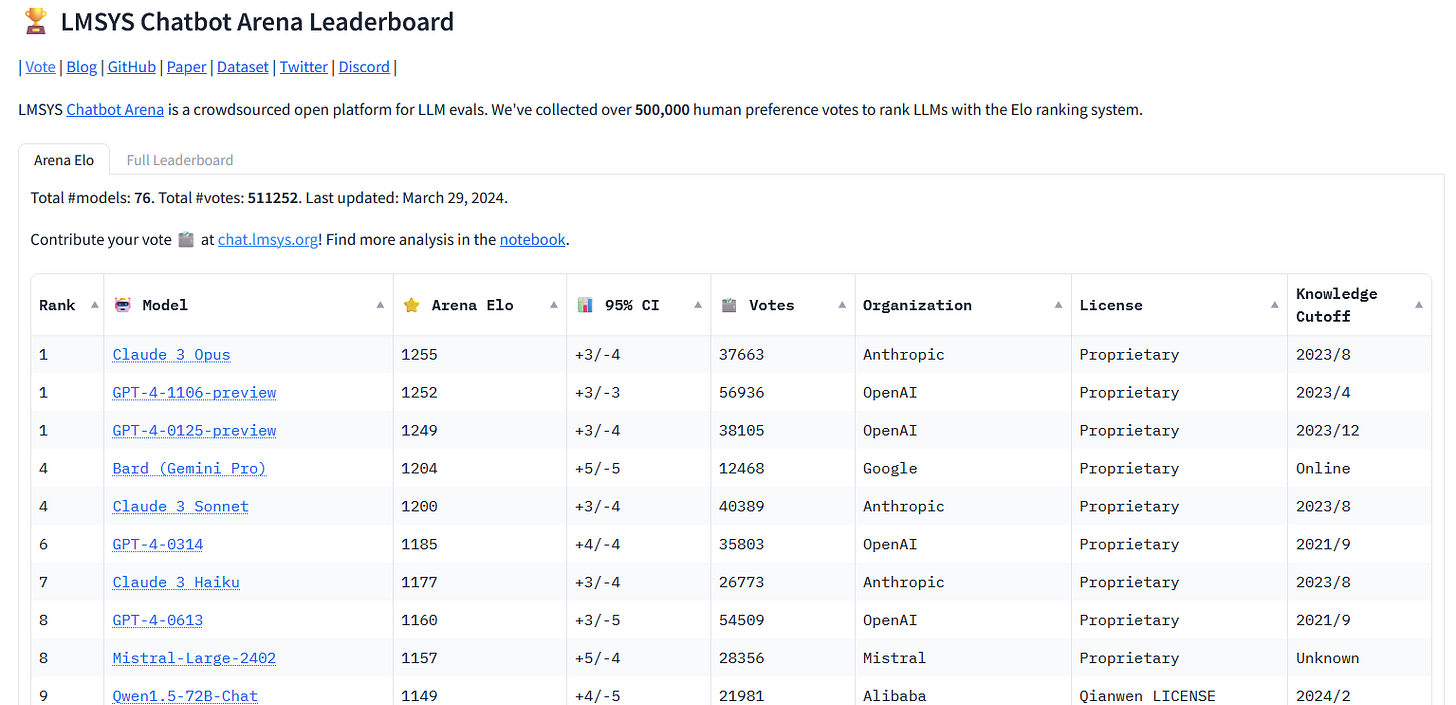

Claude 3 Opus Surpasses GPT-4 in Chatbot Arena

In a significant development, Anthropic’s Claude 3 Opus has outperformed OpenAI’s GPT-4 on the Chatbot Arena leaderboard, marking a notable shift in the AI language model landscape. The leaderboard, managed by LMSYS Org and based on subjective side-by-side comparisons by users, now shows Claude 3 Opus at the top, indicating a diversification of leading vendors in the AI space. This milestone reflects the competitive nature of AI development, with OpenAI expected to release a new model later this year, intensifying the rivalry among AI assistants.
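For readers curious how subjective head-to-head votes can turn into a ranking, here is a minimal Python sketch of an Elo-style rating update. This is an assumption for illustration, not a description of the exact method behind the Chatbot Arena leaderboard: the starting rating of 1000, the `k` factor of 32, the model names, and the votes are all made up.

```python
def expected_score(rating_a, rating_b):
    """Probability that A beats B under the standard Elo formula."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a, rating_b, outcome_a, k=32.0):
    """Return new (rating_a, rating_b) after one comparison.

    outcome_a is 1.0 if A won the vote, 0.0 if B won, 0.5 for a tie.
    k controls how far a single vote moves the ratings (assumed value).
    """
    delta = k * (outcome_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the rating sum stays constant.
    return rating_a + delta, rating_b - delta


# Hypothetical models and votes, for illustration only.
ratings = {"model_a": 1000.0, "model_b": 1000.0}
votes = [
    ("model_a", "model_b", 1.0),  # user preferred model_a
    ("model_a", "model_b", 1.0),  # user preferred model_a
    ("model_a", "model_b", 0.0),  # user preferred model_b
]

for a, b, outcome in votes:
    ratings[a], ratings[b] = update_elo(ratings[a], ratings[b], outcome)

# Sort models from highest to lowest rating to form a leaderboard.
leaderboard = sorted(ratings.items(), key=lambda item: item[1], reverse=True)
for name, rating in leaderboard:
    print(name, round(rating, 1))
```

Because each vote only nudges the ratings, a model has to win consistently across many comparisons to climb to the top, which is why a change at the top of such a leaderboard is notable.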

Be better with AI

In this section, we will provide you with comprehensive tutorials, practical tips, ingenious tricks, and insightful strategies for effectively employing a diverse range of AI tools.

Generative AI for Beginners

Microsoft has released 18 lessons for learning generative AI. They are available free of charge and were just updated last week.

The course includes:

00. Course Setup: Learn how to set up your development environment.

01. Introduction to Generative AI and LLMs: Understand what Generative AI is and how Large Language Models (LLMs) work.

02. Exploring and Comparing Different LLMs: Learn how to select the right model for your use case.

03. Using Generative AI Responsibly: Learn how to build Generative AI applications responsibly.

04. Understanding Prompt Engineering Fundamentals: Hands-on prompt engineering best practices.

05. Creating Advanced Prompts: Learn how to apply prompt engineering techniques that improve the outcome of your prompts.

06. Building Text Generation Applications: Build a text generation app using Azure OpenAI.

07. Building Chat Applications: Techniques for efficiently building and integrating chat applications.

08. Building Search Apps with Vector Databases: Build a search application that uses embeddings to search for data.

09. Building Image Generation Applications: Build an image generation application.

10. Building Low Code AI Applications: Build a generative AI application using low code tools.

11. Integrating External Applications with Function Calling: What is function calling and its use cases for applications.

12. Designing UX for AI Applications: Apply UX design principles when developing generative AI applications.

13. Securing Your Generative AI Applications: Learn the threats and risks to AI systems and methods to secure these systems.

14. The Generative AI Application Lifecycle: The tools and metrics to manage the LLM lifecycle and LLMOps.

15. Retrieval Augmented Generation (RAG) and Vector Databases: Build an application using a RAG framework to retrieve embeddings from vector databases.

16. Open Source Models and Hugging Face: Build an application using open source models available on Hugging Face.

17. AI Agents: Build an application using an AI agent framework.

18. Fine-Tuning LLMs: The what, why, and how of fine-tuning LLMs.

We hope you enjoy this newsletter!

Please feel free to share it with your friends and colleagues and follow me on socials.