Greetings, fellow AI enthusiasts!

I regret to inform you that I haven’t published a new edition of our newsletter in the past two weeks because I was on vacation. Significant developments have occurred across various domains in that time, so in this edition we’ll focus on the most impactful news and insights. Thank you for your patience, and stay tuned for an informative and engaging read!

Dear subscribers,

Thanks for reading my newsletter and supporting my work. I have more AI content to share with you soon. Everything is free for now, but if you like my work, please consider becoming a paid subscriber. This will help me create more and better content for you.

Now, let's dive into the AI rundown to keep you in the loop on the latest happenings:

📅 Meta Llama 3: The Next Leap in Language Models

🦙 Microsoft's Phi-3 Mini

💻 Boston Dynamics Unveils Electric Atlas Robot

🆕 Adobe’s Generative AI Vision for Video Editing

🕵️ NVIDIA’s New RTX GPUs

👾 Adobe Firefly Image 3 Model: Revolutionizing Creative Workflows

🤖 Mixtral 8x22B: A Leap in Open-Source AI

🧠 Intel’s Neuromorphic Computing Breakthrough

🆕 Using VASA to Revolutionize Virtual Interactions

🍎 Instagram’s A.I. Chatbot for Influencers

🧑‍💻 Reka: The New Frontier in Multimodal Language Models

🌉 Adobe’s Ethical AI Dilemma

🚧 Apple’s AI Advancements in iOS 18

🎶 AI-Driven Music Playlists

And more!

Meta Llama 3: The Next Leap in Language Models

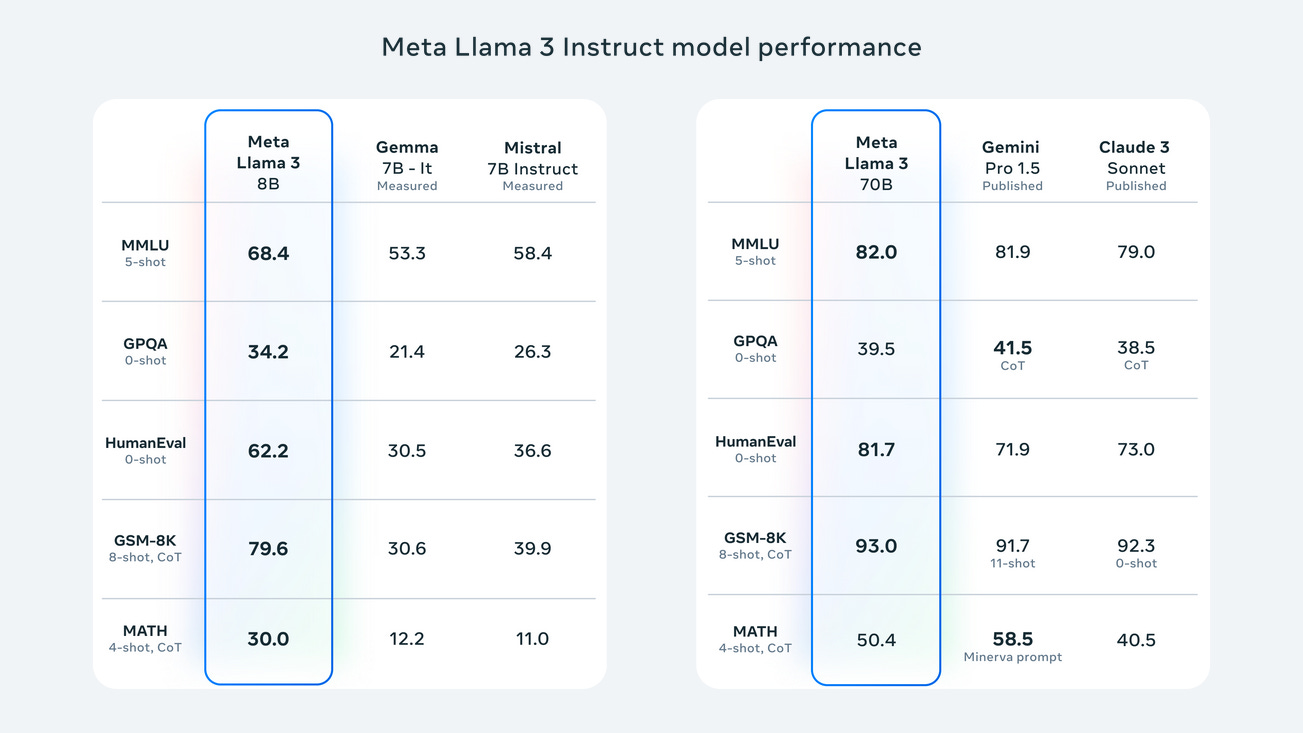

Meta has unveiled Llama 3, the latest iteration in its series of language models, with significant advances in capability. The first release includes models with 8 billion (8B) and 70 billion (70B) parameters, which Meta says set a new benchmark for models of their size.

The details:

Broad Use and Open Source: Llama 3 models are designed for a wide range of applications and are open source, encouraging community-driven innovation.

State-of-the-Art Performance: These models demonstrate superior performance on various benchmarks and feature enhanced reasoning and coding abilities.

Community-Oriented Development: Meta emphasizes an open approach, releasing models early to allow community access during development.

Advanced Training and Architecture: Llama 3 benefits from improvements in pretraining, post-training, and a tokenizer that efficiently encodes language, leading to better model performance.

Future Vision: Meta aims to make Llama 3 multilingual and multimodal, with longer context windows and continuous performance enhancements.

Why it’s important:

Llama 3 represents a significant stride in language model development, offering unprecedented capabilities that were previously confined to proprietary models. Its open-source nature fosters a collaborative environment where developers and businesses can harness its potential for various AI-driven applications. The model’s improved reasoning and coding skills are particularly beneficial for tasks requiring complex problem-solving and automation. By democratizing access to such powerful tools, Meta is catalyzing a new wave of AI innovation, enabling both enthusiasts and business owners to explore and create cutting-edge solutions. Llama 3’s advancements are not just technical milestones; they are stepping stones towards a more integrated and intelligent digital future.

Microsoft's Phi-3 Mini

Microsoft has made a major contribution to the field of artificial intelligence with the release of Phi-3 Mini, a small but powerful AI model that can complete intricate tasks with a much smaller footprint than larger models. The new model is available on multiple platforms, such as Hugging Face, Azure, and Ollama.

The details:

Phi-3 Mini’s Size: With 3.8 billion parameters and a smaller training data set than large language models, Phi-3 Mini strikes a balance between capability and efficiency.

Training Approach: Inspired by how children learn from bedtime stories, which use simpler words and sentence structures, the developers trained Phi-3 Mini using a ‘curriculum’ of similarly simple text.

Performance: Despite its size, Phi-3 Mini rivals larger models such as GPT-3.5 in capability, which Microsoft attributes to its training methods.

Future Models: Microsoft plans to expand the Phi-3 series with Small (7B parameters) and Medium (14B parameters) models, catering to different needs.

Affordability: Smaller models like Phi-3 Mini are cheaper to operate and run better on personal devices such as phones and laptops, making them a cost-effective option for businesses.
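A rough estimate of weight memory shows why a 3.8-billion-parameter model suits personal devices. The sketch below assumes every parameter is stored at one fixed precision (16-bit, or 4-bit after quantization, both illustrative choices) and counts the weights only, ignoring activations and runtime overhead; `weight_memory_gb` is a hypothetical helper, not part of any Phi-3 tooling.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Rough size of the model weights alone, in gigabytes (1 GB = 10**9 bytes).

    Ignores activations, caches, and runtime overhead, so real usage is higher.
    """
    bytes_per_param = bits_per_param / 8
    return num_params * bytes_per_param / 1e9


# Parameter counts as reported in this newsletter; the precisions are illustrative.
models = {"Phi-3 Mini": 3.8e9, "Phi-3 Small": 7e9, "Phi-3 Medium": 14e9}

for name, params in models.items():
    for bits in (16, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, Phi-3 Mini’s weights need roughly 7.6 GB at 16-bit precision and about 1.9 GB at 4-bit, versus roughly 28 GB at 16-bit for the planned 14B Medium model, which is why the smallest model is the most practical candidate for laptops and phones.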

Why it’s important:

The development of smaller AI models like Phi-3 Mini is crucial for the tech industry. They offer a more affordable and efficient way to integrate AI into various applications, especially for businesses with smaller data sets. Phi-3 Mini’s ability to perform at the level of much larger models opens up new possibilities for AI usage in everyday technology, making it a game-changer for both AI enthusiasts and business owners. The focus on creating models that are not only powerful but also accessible reflects Microsoft’s commitment to advancing AI technology in a practical and inclusive manner.

Semiconductor Resurgence in the U.S.

The semiconductor industry, once a cornerstone of American innovation, has declined in the United States, with domestic production falling from 40% to just over 10% of global capacity. The CHIPS and Science Act, which responds to these economic and national security risks, has been a game-changer, revitalizing semiconductor manufacturing and employment. A landmark preliminary agreement with Taiwan Semiconductor Manufacturing Company (TSMC) provides for the construction of cutting-edge facilities in the United States, including a third chip factory in Phoenix, Arizona. This $65 billion investment is expected to create over 25,000 direct jobs and many more indirect ones, with the aim of raising U.S. production to 20% of the world’s leading-edge semiconductors by 2030. In addition, $50 million from the CHIPS fund is allocated to local workforce training, so that local residents can access these high-quality jobs without having to relocate.

Boston Dynamics Unveils Electric Atlas Robot

Boston Dynamics has announced the retirement of its hydraulic Atlas robot and introduced a new, fully electric version designed for real-world applications. The electric Atlas is part of Boston Dynamics’ commitment to solving industry challenges with advanced mobile robots like Spot, Stretch, and now Atlas.

The details:

Hyundai Partnership: The rollout begins with Hyundai, whose automotive manufacturing operations will serve as a testing ground for Atlas.

Electric Atlas Capabilities: The new Atlas is stronger and more agile, with a broader range of motion and potential for diverse manipulation needs.

Digital Transformation: Atlas is part of a larger digital ecosystem, requiring infrastructure, connectivity, and data for successful deployment.

Software Innovations: Boston Dynamics has developed Orbit™ software for managing robot fleets and integrating AI tools for efficient real-world operation.

Humanoid Advantages: Its humanoid design lets Atlas navigate environments built for people, while its range of motion goes beyond human capabilities when performing tasks.

Why it’s important:

The electric Atlas represents a significant step in robotics, capable of performing complex tasks in environments designed for humans. Its integration into digital ecosystems and the use of AI tools enable efficient and adaptable operations. This innovation not only advances the state of robotics but also promises to transform industries by taking on dull, dirty, and dangerous tasks, paving the way for a future where robots and humans collaborate more closely.

Adobe’s Generative AI Vision for Video Editing

The video industry has undergone a transformative change with the advent of AI tools, particularly in Adobe Premiere Pro. AI-powered features have revolutionized the way professionals approach video editing, offering unprecedented levels of efficiency and creativity. The integration of AI in Adobe’s suite of video tools has not only streamlined tedious workflows but also opened up new possibilities for storytelling. With AI, editors can now tackle complex tasks with ease, such as extending shots, removing objects, or generating new content, all within the familiar interface of Premiere Pro. This evolution marks a significant milestone in the industry, as AI becomes an essential partner in the creative process, enabling creators to push the boundaries of their imagination and bring their visions to life with greater precision and artistry.

The details:

Generative AI Tools: New tools in Premiere Pro will allow editors to extend shots, add or remove objects, and generate b-roll using simple text prompts.

Firefly Video Model: Adobe is developing the Firefly Video Model to join its suite of AI models, promising professional-level results in video editing.

Third-Party Integrations: Adobe is exploring partnerships with third-party AI tools like OpenAI’s Sora, RunwayML, and Pika to expand Premiere Pro’s capabilities.

Smart Masking and Tracking: AI-powered masking and tracking tools will enable editors to manipulate moving objects in videos with ease.

Content Credentials: Adobe commits to transparency with Content Credentials, informing consumers about the AI models used in media creation.

Why it’s important:

Generative AI is set to revolutionize video editing by automating tedious tasks and enabling new creative possibilities. Adobe’s strategy to integrate AI across Creative Cloud will empower editors to achieve more with less effort, fostering innovation and efficiency in the video production industry. The commitment to transparency ensures trust and ethical use of AI in media creation.

NVIDIA’s New RTX GPUs

Ray Tracing and AI for Professionals: With the RTX A400 and A1000 GPUs, both based on the Ampere architecture, NVIDIA aims to bring AI tools and real-time ray tracing to any workstation. These GPUs are designed to meet the growing need for sophisticated computational power across a range of creative and professional applications.

Increased Efficiency and Output: With 24 Tensor Cores, the RTX A400 delivers accelerated ray tracing and AI performance beyond what CPU-based solutions offer, making it possible to run cutting-edge applications such as copilots and intelligent chatbots directly on PCs. With 72 Tensor Cores, the A1000 can run generative AI workloads over three times faster and handle graphics and rendering jobs up to three times faster.

The details:

AI Integration: As AI is built into productivity and design apps, these GPUs are raising the bar for professional computing.

Advanced RTX Offerings: By extending access to AI and ray-tracing technologies, the RTX A400 and A1000 GPUs give professionals revolutionary tools.

Real-Time Ray Tracing: This technology allows artists to push the envelope of realism and creativity by producing vivid, physically accurate 3D outputs.

Energy-Efficient Design: Their compact single-slot form factor and low 50W power consumption make these GPUs a great choice for small workstations.

Adaptable Uses: From industrial planning to healthcare, these GPUs help a variety of professionals improve their workflows and achieve higher levels of realism in their work.

Why it’s important:

With the release of the NVIDIA RTX A400 and A1000 GPUs, professional computing has advanced significantly. By providing cutting-edge AI, graphics, and compute capabilities, these GPUs will increase productivity and open up new creative possibilities. They meet the growing demand for processing power and could transform everyday workflows across industries by making AI-enhanced tasks and ray-traced rendering more accessible and efficient for professionals.

Adobe Firefly Image 3 Model: Revolutionizing Creative Workflows

Adobe’s latest innovation, the Firefly Image 3 Model, is a game-changer for creative professionals and businesses alike. With its enhanced photorealistic quality and styling capabilities, it offers unprecedented levels of creative expression and control.

The details:

Photorealistic Quality: The new model boasts remarkable improvements in image detail and accuracy, providing users with stunningly realistic results.

Styling Capabilities: Users can now explore a greater variety of styles, allowing for more personalized and unique creations.

Generative Expand Feature: This new feature in the Generative Fill module enables the alteration of an image’s aspect ratio or size, catering to diverse content needs.

Integration into Adobe Workflows: Firefly is seamlessly integrated into popular Adobe applications, transforming image editing and design processes.

Responsible AI Development: Adobe emphasizes the responsible use of AI, with initiatives like the Content Authenticity Initiative ensuring transparency in digital content creation.

Why it’s important:

The Adobe Firefly Image 3 Model represents a significant leap forward in generative AI technology. It not only enhances the creative capabilities of individuals and businesses but also addresses the ethical considerations of AI usage. By attaching Content Credentials to AI-generated assets, Adobe fosters a transparent and trustworthy digital ecosystem, which is crucial as AI becomes increasingly integrated into our daily lives and commercial activities. This commitment to responsible AI development sets a new standard for the industry and underscores the importance of ethical considerations in technological advancements.

Mixtral 8x22B: A Leap in Open-Source AI

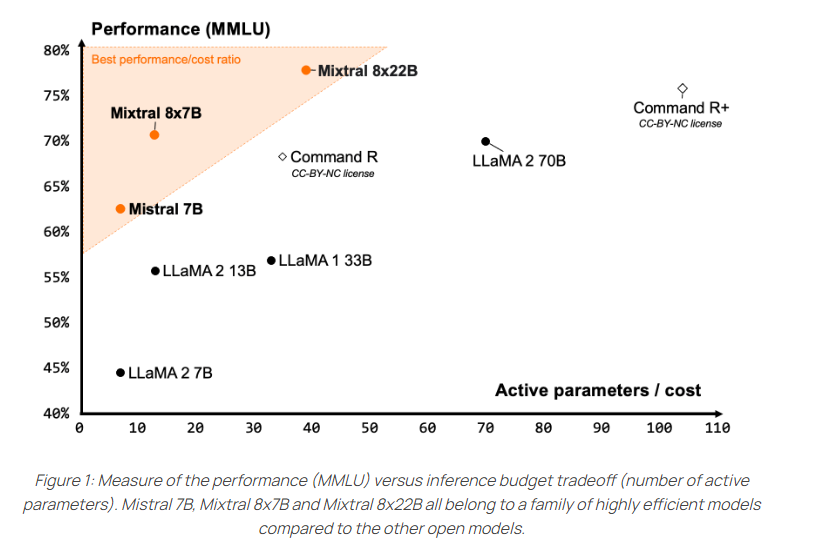

Mistral AI, a French startup, has introduced a groundbreaking open-source language model, Mixtral 8x22B. This model is not only efficient but also boasts the highest performance among its open-source counterparts.

The details:

Sparse Model: Mixtral 8x22B utilizes a sparse mixture-of-experts approach, actively using 39 billion of its 141 billion parameters.

Multilingual and Versatile: It supports multiple languages and excels in math and programming tasks.

Accessible and Permissive: Released under the Apache 2.0 license, it allows unrestricted use, fostering innovation and development.

Benchmark Excellence: The model outperforms others in comprehension, logic, and knowledge tests, setting new standards for open-source AI.

Platform Availability: The model is available on Hugging Face for community fine-tuning and can be tested on Mistral’s “la Plateforme”.
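A sparse mixture-of-experts layer sends each token through only a few expert sub-networks, which is how a model can hold 141 billion parameters yet use only about 39 billion per token. The toy sketch below assumes top-2 routing over 8 experts, in line with the “8x22B” name and the routing Mistral has described for its Mixtral models; the experts are stand-in scaling functions and the router scores are made up, so it illustrates the idea rather than reproducing Mistral’s implementation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Select the k highest-scoring experts and renormalize their scores into weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def sparse_moe(x, experts, router_logits, k=2):
    """Weighted sum of the chosen experts' outputs; unselected experts are never called."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy setup: 8 "experts" that just scale their input (stand-ins for feed-forward blocks).
experts = [lambda x, s=s: s * x for s in range(8)]
router_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]  # made-up scores for one token

print(top_k_route(router_logits))  # experts 4 and 1 are selected
print(sparse_moe(1.0, experts, router_logits))
print(f"active share of parameters: {39 / 141:.0%}")  # figures from the article
```

Note that 39/141 is about 28%, a little more than the 25% that 2 of 8 experts would suggest: in Mixtral-style models the attention and embedding layers are shared by every token, so they always count as active.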

Why it’s important:

Mixtral 8x22B represents a significant stride in the democratization of AI technology. Its multilingual capabilities and strong performance in various benchmarks make it a valuable asset for both AI enthusiasts and business owners. By offering an open-source model with such high efficiency and performance, Mistral AI is paving the way for more inclusive and widespread AI development and application. This model’s release could potentially accelerate innovation and provide a robust tool for those looking to integrate advanced AI into their operations or research.

Intel’s Neuromorphic Computing Breakthrough

Intel has unveiled the world’s largest neuromorphic computer, designed to emulate the human brain’s functionality. This innovation aims to run more complex AI models than current computers, although it faces engineering challenges before surpassing existing technology.

The details:

Neuromorphic Architecture: Intel’s Hala Point neuromorphic computer integrates 1.15 billion artificial neurons across 1,152 Loihi 2 chips, performing 380 trillion synaptic operations per second.

Energy Efficiency: Intel claims Hala Point uses 100 times less energy than conventional computers on optimization problems, which could dramatically reduce energy consumption in computing.

Software Bottleneck: The development of software to translate real-world problems for neuromorphic computing is still nascent, posing a significant challenge to its adoption.

Continuous Learning AI: Intel proposes that neuromorphic computers could enable AI models to learn continuously, a stark contrast to current models that require retraining for new tasks.

AGI Potential: Some experts believe neuromorphic computing could be a step toward artificial general intelligence (AGI), with capabilities beyond those of large language models like ChatGPT.
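Dividing the reported totals by the number of chips gives a feel for the scale of each Loihi 2 chip. This is simple arithmetic on the figures above and assumes neurons and operations are spread evenly across chips, which is a simplification.

```python
# Figures reported above for Intel's Hala Point system.
TOTAL_NEURONS = 1.15e9          # artificial neurons
NUM_CHIPS = 1152                # Loihi 2 chips
SYNAPTIC_OPS_PER_SEC = 380e12   # synaptic operations per second

# Even-split assumption: every chip carries the same share of the load.
neurons_per_chip = TOTAL_NEURONS / NUM_CHIPS
ops_per_chip = SYNAPTIC_OPS_PER_SEC / NUM_CHIPS

print(f"~{neurons_per_chip:,.0f} neurons per chip")
print(f"~{ops_per_chip:.2e} synaptic operations per second per chip")
```

The result, roughly one million neurons per chip, shows that Hala Point’s size comes from combining many chips rather than from a single very large one.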

Why it’s important:

Neuromorphic computing represents a paradigm shift in artificial intelligence, offering a more brain-like approach to processing information. This could lead to AI models that learn and adapt over time, much like humans do, without the need for constant retraining. Moreover, the energy efficiency of such systems could significantly reduce the environmental impact of computing, making it a critical development for both AI enthusiasts and business owners looking to invest in sustainable and advanced technologies. Intel’s Hala Point is a pioneering step in this direction, although the full potential of neuromorphic computing will only be realized once the software and tools mature enough to harness its unique capabilities.

Using VASA to Revolutionize Virtual Interactions

Microsoft Research Asia’s state-of-the-art framework, VASA, is revolutionizing our interactions with virtual characters. From a single static image and a speech audio clip, VASA generates lifelike talking-face videos in real time, complete with accurate lip-audio synchronization and natural head motion.

The details:

Hyper-Realistic Avatars: VASA generates lifelike avatars that exhibit precise lip-audio sync and naturalistic facial expressions.

Innovative Technology: The framework operates in a face latent space, allowing for holistic facial dynamics and head movement generation.

High Performance: VASA supports online generation of 512x512 videos at up to 40 FPS with minimal latency, using a single NVIDIA RTX 4090 GPU.

Versatile Applications: The method can handle inputs that were not present in its training set, including artistic photos, singing audio, and non-English speech.

Responsible AI: The research team emphasizes VASA’s positive potential while acknowledging that it could be misused, and says it is committed to developing it responsibly.

Why it’s important:

VASA’s technology is a game-changer for creating virtual AI avatars with visual affective skills. It creates new opportunities to improve accessibility, promote educational equity, and offer companionship or therapeutic support. Real-time, lifelike interactions with avatars are a crucial development in the AI and IT industries, with profound implications for the future of communication and entertainment.

Instagram’s A.I. Chatbot for Influencers

Instagram is exploring the frontiers of artificial intelligence with its new “Creator A.I.” program, designed to help influencers by automating interactions with their followers. The chatbot mimics each influencer’s communication style, drawing on their past content to craft personalized responses. While still in the testing phase, the program promises to boost fan engagement without the exhausting effort of replying manually. However, it raises questions about authenticity in digital interactions, as influencers and fans alike weigh the implications of conversing with an A.I. proxy. Meta’s broader A.I. ambitions reflect a commitment to integrating this technology across its platforms, signaling a future where A.I. assistants are integral to our daily digital experiences.

Reka: The New Frontier in Multimodal Language Models

In the ever-evolving AI landscape, multimodal language models (MLMs), which link text, images, video, and audio, play a pivotal role. Reka AI has developed Reka, a series of cutting-edge MLMs that integrate these diverse data types, offered in three variants (Reka Core, Reka Flash, and Reka Edge), each with its own capabilities.

The details:

Reka Core: The flagship model, offering unparalleled multimodal understanding and processing.

Modular Architecture: Employs a ‘Noam’ architecture with advanced elements for efficient processing.

Extended Context Length: Supports a context length of up to 128K tokens, which suits complex tasks.

Performance Excellence: Outperforms competitors in evaluations of multimodal and language tasks.

Flexible Deployment: Accessible via API, on-premise, or on-device, catering to various industries.

Why it’s important:

Reka represents a significant leap in MLMs, crucial for AI’s future. Its ability to comprehend and reason across multiple data forms makes it an indispensable tool for sectors like e-commerce, healthcare, and robotics. By advancing multimodal understanding, Reka paves the way for more intuitive and natural human-AI interactions, enhancing applications and services across the board.

Strategic AI Expansion in Emerging Markets

Microsoft has announced a significant investment in G42, an AI leader in the UAE, to co-create and deploy advanced AI solutions on Microsoft Azure across various industries in the Middle East, Central Asia, and Africa. This partnership, marked by a $1.5 billion investment for a minority stake and a board position for Microsoft’s Brad Smith, aims to leverage Microsoft Cloud’s capabilities to advance G42’s AI strategy, including generative AI and next-gen infrastructure. The collaboration is underpinned by a commitment to security, compliance, and responsible AI development, with oversight from a detailed Intergovernmental Assurance Agreement. G42’s trust in Microsoft’s cloud platform is foundational to the partnership, with plans to migrate its technology infrastructure to Azure, enhancing service delivery and supporting AI product development. The partnership also includes making G42’s Arabic Large Language Model available on Azure, aiding digital transformation in FAB, and advancing precision medicine through collaborations with global health institutions.

Adobe’s Ethical AI Dilemma

Adobe’s Firefly AI, marketed as a “commercially safe” tool, was trained primarily on Adobe Stock images. However, it has come to light that Firefly’s training also included AI-generated content from competitors like Midjourney. This revelation raises questions about the true source of Firefly’s training data, as Adobe Stock users may have uploaded Midjourney-generated images, inadvertently incorporating them into Firefly’s learning process. Adobe’s public stance on Firefly’s safety due to its training data now faces scrutiny.

AI-Driven Music Playlists

AI is enabling music streaming services to offer new features such as custom playlists. Amazon Music has released a beta version of Maestro, an AI playlist feature, for customers in the United States. Spotify had previously unveiled an AI playlist tool for Premium members in Australia and the United Kingdom. Maestro creates playlists from just a few text prompts; Amazon Music Unlimited subscribers can play them in full, while users on other tiers get previews. These services use AI to improve the user experience while guarding against potential problems by filtering offensive content and refining the system based on user feedback. The feature promises a more personalized and interactive listening experience and marks a notable step in bringing AI into popular entertainment.

Apple’s AI Advancements in iOS 18

Apple is set to enhance user privacy with its upcoming iOS 18 by introducing AI features that process data directly on the iPhone, bypassing cloud services. The anticipated update will include on-device AI capabilities, starting with basic text analysis and response generation functions available offline. Apple’s internal large language model, known as “Ajax,” aims to provide a competitive edge by eliminating reliance on cloud-based processing, addressing concerns over AI hallucinations—where AI tools confidently present fabricated information. Advanced AI features will still require internet connectivity, but the move towards on-device processing signifies a shift in how AI tools are integrated into consumer technology. Apple’s full AI strategy will be unveiled at the WWDC event on June 10.

Be better with AI

In this section, we will provide you with comprehensive tutorials, practical tips, ingenious tricks, and insightful strategies for effectively employing a diverse range of AI tools.

Generative AI for Beginners

In this guide, you will learn how to use Parler-TTS, a free text-to-speech engine that quickly turns written text into spoken words.

Accessing Parler-TTS:

You can find Parler-TTS on the Hugging Face website.

Explore the interface to get familiar with its features.

Locating Input Fields:

Once you’re on the Parler-TTS page, look for the input fields.

These fields allow you to provide your text prompt and specify voice characteristics.

Writing an Effective Text Prompt:

Craft your text prompt carefully. Consider including phrases like “very clear audio” to guide the TTS model.

Use punctuation appropriately to enhance the naturalness of the generated speech.

Specify desired features such as gender, speaking rate, and pitch in your prompt.

Generating Audio:

After entering your text prompt and adjusting the voice features, click on the “Generate Audio” button.

The TTS model will initiate the speech generation process.

You can then listen to or download the generated audio.
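The prompt-writing tips above can be captured in two small helpers: one assembles a voice description from features such as gender, speaking rate, and pitch, and the other makes sure the input text ends with punctuation. Both functions are hypothetical and written only for illustration; they build strings you would paste into the Parler-TTS input fields and do not call Parler-TTS itself.

```python
def build_voice_description(gender: str, speaking_rate: str, pitch: str,
                            very_clear: bool = True) -> str:
    """Assemble a plain-English voice description (hypothetical helper)."""
    description = f"A {gender} speaker talks at a {speaking_rate} pace with a {pitch} pitch."
    if very_clear:
        # Phrase suggested in the tips above to steer the model toward clean audio.
        description += " The recording is very clear audio."
    return description


def finish_sentence(text: str) -> str:
    """Trim whitespace and add a full stop if the text has no closing punctuation."""
    text = text.strip()
    return text if text.endswith((".", "!", "?")) else text + "."


text = finish_sentence("Welcome to this week's AI rundown")
description = build_voice_description("female", "moderate", "slightly high")
print("Text:", text)
print("Description:", description)
```

Keeping the features in a template like this makes it easy to change one characteristic at a time and compare the generated audio.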

We hope you enjoy this newsletter!

Please feel free to share it with your friends and colleagues and follow me on socials.