NeuralByte's weekly AI rundown - 17th March

Announcement of the most anticipated open-source model!

Greetings fellow AI enthusiasts!

This week was full of exciting news. Llama 3 was announced and will most likely become the best open-source model. The thing I enjoyed most was playing with the new music generation tool Udio. I encourage you to try it too. It’s in beta and it’s free for now. Post your awesome song in the comments.

Dear subscribers,

Thanks for reading my newsletter and supporting my work. I have more AI content to share with you soon. Everything is free for now, but if you like my work, please consider becoming a paid subscriber. This will help me create more and better content for you.

Now, let's dive into the AI rundown to keep you in the loop on the latest happenings:

📅 Inside Big Tech’s AI Data Acquisition Race

🦙 Meta’s Llama 3: The Next Leap in AI

💻 Semiconductor Resurgence in the U.S.

🆕 Meta’s Next-Gen AI Infrastructure

🕵️ More Agents, More Power

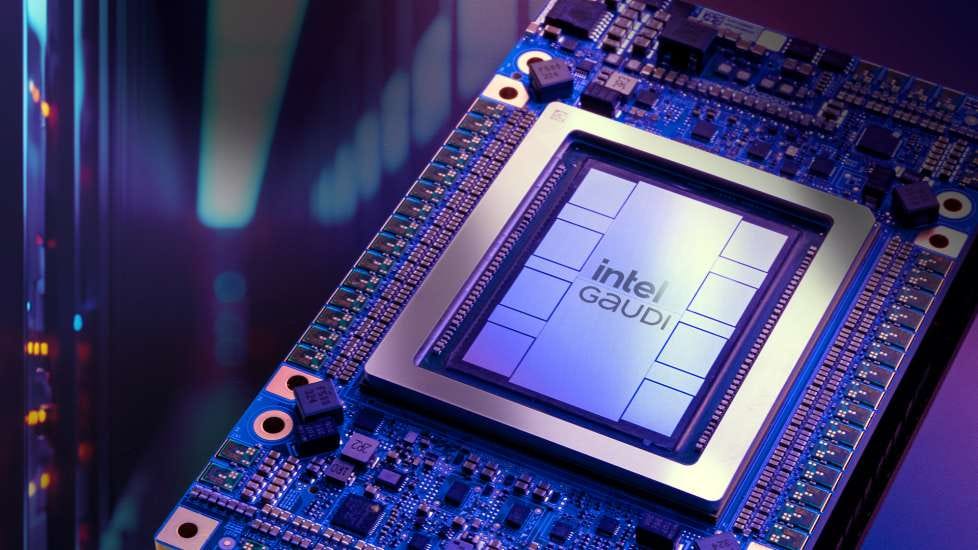

👾 Intel’s Leap into Generative AI with Gaudi 3

🤖 Tesla’s Upcoming Robotaxi

🧠 Cohere’s Command R+ Achieves Top 6 in Arena

🆕 Mistral AI’s Mixtral 8x22B

🍎 Apple’s M4 Chip Revolution

🧑‍💻 Stable LM 2 Series Expansion

🌉 Bridging the Gap Between Humans and Sensor Data

🚧 OpenAI’s Internal Turmoil and AI Development Concerns

🎶 Udio: The New AI Music Sensation

And more!

Inside Big Tech’s AI Data Acquisition Race

The generative AI revolution is breathing new life into old internet platforms like Photobucket, once a leading image-hosting site. As tech giants and startups alike scramble to secure data for training AI models, a hidden market for content such as personal photos and chat logs is emerging. This market is driven by the need for ‘ethically sourced’ content that can’t be freely scraped from the web, leading to deals worth millions with content owners.

The details:

Photobucket’s Revival: The company is negotiating to license its 13 billion photos and videos for AI training, with rates ranging from 5 cents to $1 per photo and more than $1 per video.

Legal and Ethical Concerns: Tech companies face lawsuits and regulatory scrutiny over using web-scraped data for AI training, prompting them to seek content behind paywalls and login screens.

Generative Data Gold Rush: The rush for private collections of data has led to a burgeoning industry of data brokers and dedicated AI data firms.

Shutterstock’s Big Deals: Shutterstock has struck agreements with major tech companies like Meta, Google, and Apple, initially ranging from $25 million to $50 million each.

User Privacy Risks: Resurrecting archives from platforms like Photobucket for AI training raises privacy concerns, as personal photos could end up in AI outputs without explicit consent.
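
To get a feel for the scale of these numbers, here is a quick back-of-the-envelope calculation in Python. It is only a sketch: it assumes, purely for illustration, that every one of the 13 billion items were licensed at the quoted per-photo rates, which the reported negotiations do not claim. The function name `licensing_range_usd` is made up for this example.

```python
def licensing_range_usd(num_items: int, low_cents: int, high_cents: int) -> tuple:
    """Return the (low, high) total value in whole dollars.

    Rates are given in cents so the arithmetic stays in exact integers.
    """
    low_total = num_items * low_cents // 100
    high_total = num_items * high_cents // 100
    return low_total, high_total


# Illustrative assumption: all 13 billion items priced at the per-photo rates.
low, high = licensing_range_usd(13_000_000_000, low_cents=5, high_cents=100)
print(f"${low:,} to ${high:,}")  # prints "$650,000,000 to $13,000,000,000"
```

Even the low end of that hypothetical range is in the hundreds of millions of dollars, which helps explain why dormant archives are suddenly attractive.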

Why it’s important:

The pursuit of data for AI training is not just a technological endeavor but a strategic business move. As AI models require vast amounts of diverse data to improve, companies are investing heavily in securing this resource. The implications are far-reaching, affecting copyright laws, user privacy, and the very nature of content creation. This trend underscores the importance of ethical considerations in AI development and the potential for new revenue streams for content owners. It also highlights the need for clear regulations to protect individuals’ privacy as their data becomes a commodity in the AI economy.

Meta’s Llama 3: The Next Leap in AI

Meta is poised to unveil Llama 3, its latest large language model, which could revolutionize the AI landscape. With an anticipated early release of a smaller version next week and a full open-source model set for July, Llama 3 is expected to rival giants like Claude 3 and GPT-4. Meta’s investment in cutting-edge AI development is evident in their procurement of Nvidia’s H100 GPUs, underscoring their commitment to advancing AI capabilities. Llama 3 promises a spectrum of models, from compact versions competing with Claude Haiku to robust ones akin to GPT-4, all while being multimodal and less restrictive than its predecessor. This strategic move not only fuels excitement within the tech community but also caters to businesses seeking cost-effective, fine-tunable AI solutions.

Semiconductor Resurgence in the U.S.

The semiconductor industry, once a cornerstone of American innovation, has declined sharply, with domestic production falling from 40% to just over 10% of the world’s capacity. In response to the economic and national security risks, the CHIPS and Science Act is funding a revival of semiconductor manufacturing and employment. A landmark preliminary agreement with Taiwan Semiconductor Manufacturing Company (TSMC) promises the construction of cutting-edge facilities in the United States, including a third chip factory in Phoenix, Arizona. This $65 billion investment is set to create over 25,000 direct jobs and numerous indirect ones, with the aim of raising the U.S. share of global leading-edge semiconductor production to 20% by 2030. Moreover, $50 million from the CHIPS fund is allocated for local workforce training, so that high-quality jobs remain accessible to residents without the need to relocate.

Meta’s Next-Gen AI Infrastructure

Meta is advancing its AI infrastructure with the next generation of the Meta Training and Inference Accelerator (MTIA), designed to support sophisticated AI models and services. This new chip system is part of Meta’s full-stack development program, focusing on custom silicon tailored to Meta’s unique workloads.

The details:

MTIA v1: Meta’s first-generation AI inference accelerator, designed for deep learning recommendation models across Meta products.

Next-Gen MTIA: Offers more than double the compute and memory bandwidth, optimized for ranking and recommendation models.

Efficient Architecture: The chip features an 8x8 grid of processing elements, boosting dense and sparse compute performance significantly.

Full-Stack Co-Design: Meta’s approach includes co-designing hardware, software, and silicon for optimal inference solutions.

Software Integration: The MTIA stack integrates with PyTorch 2.0, enhancing developer efficiency and supporting a wide range of PyTorch operators.

Why it’s important:

Meta’s investment in custom silicon infrastructure is crucial for handling the increasing complexity of AI workloads. The next-gen MTIA chip not only improves performance but also efficiency, enabling Meta to provide high-quality recommendations and experiences for users worldwide. This strategic move positions Meta to accommodate future AI advancements and maintain a competitive edge in the tech industry.

More Agents, More Power

Researchers have discovered a straightforward method to improve the performance of large language models (LLMs) by using a sampling-and-voting technique that scales with the number of agents. This method is compatible with existing complex methods, offering further enhancements without additional intricate designs.

The details:

Scaling with Simplicity: The study reveals that increasing the number of LLM agents through a simple sampling-and-voting method can significantly enhance performance across various tasks.

Compatibility and Enhancement: This approach is orthogonal to existing methods, meaning it can be combined with them to achieve even better results.

Task Difficulty Correlation: The effectiveness of the method correlates with the difficulty of the tasks, with more complex tasks showing more significant improvements.

Optimization Strategies: Based on the observed properties, the researchers propose optimization strategies that can trigger the power of "More Agents."

Public Code Availability: The code for this method is publicly available, allowing for broader experimentation and application.
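
The core idea can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' released code: it uses plain majority voting over exact-match answers, and `sample_answer` is a hypothetical stand-in for a call to whatever LLM you use. The toy `make_noisy_agent` helper is also invented here, only to simulate a model that is right some fraction of the time.

```python
import random
from collections import Counter
from typing import Callable, List


def sample_and_vote(query: str,
                    sample_answer: Callable[[str], str],
                    num_agents: int = 10) -> str:
    """Sample `num_agents` answers to the same query and return the most common one."""
    if num_agents < 1:
        raise ValueError("num_agents must be at least 1")
    answers = [sample_answer(query) for _ in range(num_agents)]
    # most_common(1) returns [(answer, count)]; ties go to the answer seen first.
    return Counter(answers).most_common(1)[0][0]


def make_noisy_agent(correct: str, wrong: List[str], p_correct: float,
                     rng: random.Random) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: answers correctly with probability p_correct."""
    def agent(query: str) -> str:
        return correct if rng.random() < p_correct else rng.choice(wrong)
    return agent


if __name__ == "__main__":
    rng = random.Random(0)
    agent = make_noisy_agent("4", ["3", "5", "22"], p_correct=0.5, rng=rng)
    for n in (1, 5, 25):
        print(n, "agents ->", sample_and_vote("What is 2 + 2?", agent, num_agents=n))
```

With a toy agent like this, the voted answer tends to become more reliable as the number of samples grows, which is the effect the study describes. Open-ended tasks, where two correct answers rarely match word for word, would need some similarity-based vote instead of exact matching.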

Why it’s important:

The ability to scale LLM performance simply by increasing the number of agents is a game-changer for AI enthusiasts and business owners alike. It means that complex tasks can be tackled more effectively without the need for intricate and costly methods. For businesses, this translates to more efficient data processing and decision-making capabilities. The study’s findings are particularly relevant as they offer a cost-effective way to leverage the growing capabilities of AI, ensuring that the technology remains accessible and practical for a wide range of applications. The public availability of the code also encourages innovation and collaboration in the AI community, fostering advancements that could shape the future of technology.

Intel’s Leap into Generative AI with Gaudi 3

Intel has unveiled the Gaudi 3 AI accelerator at the Intel Vision event, marking a significant advancement in enterprise generative AI. This new technology promises to address the challenges businesses face in scaling AI initiatives, offering performance, openness, and choice.

The details:

Gaudi 3 AI Accelerator: Offers up to 4x more AI compute for BF16 and a 1.5x increase in memory bandwidth over its predecessor, enabling a significant leap in AI training and inference.

Performance Edge: According to Intel, Gaudi 3 delivers 50% faster time-to-train on average across various models than the Nvidia H100, and outperforms it in inference throughput and power efficiency.

Open, Scalable Systems: Intel emphasizes open community-based software and industry-standard Ethernet networking, allowing enterprises to scale from single nodes to mega-clusters.

Strategic Collaborations: Partnerships with Dell, HPE, Lenovo, and Supermicro, and collaborations with Google Cloud, Thales, and Cohesity to leverage confidential computing capabilities.

Open Platform Initiative: Intel intends to create an open platform for enterprise AI, in collaboration with industry leaders, to ease deployment and accelerate GenAI adoption.

Why it’s important:

Intel’s Gaudi 3 AI accelerator is a game-changer for enterprises looking to scale their generative AI projects from pilot to production. It addresses key issues such as complexity, fragmentation, data security, and compliance requirements. With its promise of enhanced performance and open, scalable systems, Intel is positioning itself at the forefront of the AI revolution, enabling businesses to unlock new value and innovation without compromising on security or performance. This strategic move not only showcases Intel’s commitment to advancing AI technology but also its dedication to fostering an open ecosystem that benefits the entire industry.

Tesla’s Upcoming Robotaxi

Tesla is set to unveil its long-awaited robotaxi on August 8, as announced by CEO Elon Musk. Despite previous promises and ambitious targets for self-driving technology, the company has yet to deliver a fully autonomous vehicle. This announcement comes amidst investor concerns over slowing growth and follows a period of lower-than-expected vehicle deliveries. Meanwhile, competitors like Waymo and Didi are advancing in the autonomous vehicle space, with Waymo expanding operations and partnering with Uber Eats for delivery services. As Tesla prepares for its reveal, the industry watches closely, remembering past delays in product launches, such as the Tesla Semi, which took years from unveiling to delivery.

Cohere’s Command R+ Achieves Top 6 in Arena

Cohere’s Command R+ has climbed to 6th place on the AI Arena leaderboard with over 13,000 human votes, matching the performance level of GPT-4-0314. That makes Command R+ the highest-ranked open model on the leaderboard and underlines Cohere’s contribution to the open AI community. Other updates include Qwen1.5-32B-Chat approaching the top 10 and Starling-7B-Beta remaining the best 7B model.

Mistral AI’s Mixtral 8x22B

French AI startup Mistral has unveiled Mixtral 8x22B, a cutting-edge large language model (LLM) poised to surpass its predecessor, Mixtral 8x7B, and rival models from OpenAI and Meta. With roughly 141 billion total parameters (about 39 billion of them active per token) and a 64K-token context window (about 65,000 tokens), Mixtral 8x22B is designed for a wide array of tasks and is openly available for anyone to download.

The details:

Impressive Capabilities: Mixtral 8x22B’s 64K-token context window and roughly 141 billion total parameters put it in a position to outperform established models like GPT-3.5 and Llama 2. The 176 billion figure sometimes quoted comes from multiplying 8 by 22 billion, but the experts share some layers, so the real total is lower.

Open-Source Accessibility: Mistral’s commitment to open-source allows anyone to download and utilize Mixtral 8x22B, fostering innovation and collaboration in the AI community.

Pioneering Technology: As a frontier model, Mixtral 8x22B embodies the spirit of the Wild West, aiming to outduel predecessors with more advanced and versatile technology.

Regulatory Challenges: The release of such powerful models raises concerns about misuse and the need for industry self-regulation and government intervention.

Criticism and Risks: Mistral’s open-source policy faces scrutiny for the potential misuse of its AI models and the difficulty in addressing flaws or biases that may arise.

Why it’s important:

The advent of Mixtral 8x22B marks a significant milestone in the AI industry, offering unprecedented capabilities that can revolutionize how we interact with technology. Its open-source nature democratizes access to advanced AI, encouraging a broader range of applications and innovations. However, the potential for misuse and the challenges of regulating such powerful technology underscore the need for careful consideration and responsible deployment. As AI continues to evolve, it is crucial to balance the benefits of these frontier models with the ethical and safety concerns they present. Mistral AI’s latest release is not just a technological achievement; it is a catalyst for ongoing discussions about the future of AI and its impact on society.

Apple’s M4 Chip Revolution

Apple Inc. is set to revitalize its Mac lineup with the introduction of the M4 chip, a cutting-edge processor that emphasizes artificial intelligence capabilities. Slated to replace the M3 chips introduced just five months prior, the M4 is expected to come in at least three variants, powering a rapid transition across all Mac models. This strategic move aims to reinvigorate Apple’s computer sales by leveraging the M4’s advanced AI functionalities and memory enhancements. The company’s swift progression to the M4 chip underscores its commitment to innovation and its foresight in the ever-evolving tech landscape.

Stable LM 2 Series Expansion

The Stable LM 2 series introduces a 12 billion parameter base model and an instruction-tuned variant, trained on 2 trillion tokens across seven languages. These models offer a balance of performance, efficiency, and speed, with the 12B model excelling in multilingual tasks and the instruction-tuned version enhancing tool usage and function calling.

Performance Comparisons and Commercial Use

Stable LM 2 12B is compared with other leading language models, showcasing its solid performance in zero-shot and few-shot tasks. It is now available for commercial and non-commercial purposes with a Stability AI Membership, promising to empower developers and businesses in AI language technology.

Bridging the Gap Between Humans and Sensor Data

Archetype AI is revolutionizing the way we interact with the physical world by translating complex sensor data into plain language. This startup’s AI models act as a liaison, enabling people to understand what’s happening in their surroundings, whether it’s within a building, vehicle, or even the human body.

The details:

Newton Model: Archetype’s foundational AI model, Newton, processes data from various sensors, providing insights through questions, charts, or code.

Real-World Application: The technology has vast potential, from monitoring fragile cargo to ensuring vaccine shipments maintain their effectiveness.

User-Friendly Interface: Newton allows users to converse with their environment, like a house or factory, in simple language, eliminating the need for complex dashboards.

Investor Interest: Companies like Amazon and Volkswagen are backing Archetype, seeing the value in optimizing logistics and enhancing customer experiences.

Healthcare Potential: Archetype is also exploring healthcare applications, such as aiding in recovery assessments after surgeries.

Why it’s important:

Archetype’s AI represents a significant leap in making sensor data accessible and actionable for everyday use. By simplifying the interpretation of complex data, it empowers individuals and businesses to make informed decisions and address real-world problems efficiently. This innovation not only streamlines operations across various industries but also has the potential to transform our interaction with technology, making it more intuitive and integrated into our daily lives. Archetype AI’s approach could pave the way for a future where AI and sensor technology work hand in hand to enhance our understanding and management of the physical world around us.

OpenAI’s Internal Turmoil and AI Development Concerns

OpenAI, an AI research lab known for its generative AI bot ChatGPT, has recently dismissed two key researchers, Leopold Aschenbrenner and Pavel Izmailov, over alleged information leaks. The organization, which once prioritized humanity’s welfare over investors, shifted to a for-profit model in 2019 and has faced internal conflicts, particularly regarding the pace of AI development. These tensions peaked with the temporary dismissal of CEO Sam Altman and the sidelining of Chief Scientist Ilya Sutskever, who had expressed concerns about AI’s rapid progress and its potential societal impacts. Amidst these challenges, OpenAI continues to advance in the AI field, sparking both excitement and caution among AI enthusiasts and business leaders.

Udio: The New AI Music Sensation

Udio, the latest artificial intelligence music tool, has emerged from stealth mode, capturing the music community’s attention with its remarkable ability to produce emotionally resonant synthetic vocals. Developed by ex-Google DeepMind engineers, Udio has garnered investment and interest from notable figures like will.i.am and Common. Its launch was preceded by leaked tracks that sparked curiosity about the AI’s capabilities. After a week of testing, it’s clear that Udio represents a significant moment for AI music, akin to what Sora was for AI video, with superior vocals and a more natural sound.

Be better with AI

In this section, we will provide you with comprehensive tutorials, practical tips, ingenious tricks, and insightful strategies for effectively employing a diverse range of AI tools.

Generative AI for Beginners

Many people already know Whisper, OpenAI’s model for transcribing audio to text. You can now use it through an easy-to-use interface in a web browser.

Open Whisper Web in your browser; it’s free and doesn’t require any registration.

Select your video or audio source: you can input a URL, upload a file, or use a real-time feed.

Initiate the transcription by clicking on the “Transcribe Audio” button.

Obtain the transcript as a plain text file, providing a complete transcription of your video or audio content.
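
If you would rather run the transcription yourself instead of in the browser, the same steps can be sketched in Python. This assumes you have installed the open-source `openai-whisper` package (and ffmpeg, which it relies on). The helper name `transcribe_to_text`, the optional `model` argument, and the choice of the `base` model are illustrative assumptions, not part of Whisper Web.

```python
from pathlib import Path


def transcribe_to_text(audio_path, out_path, model_name="base", model=None):
    """Transcribe an audio file and save the transcript as a plain text file.

    `model` may be any object with a `transcribe(path)` method that returns
    a dict containing a "text" key; if omitted, a Whisper model is loaded.
    """
    if model is None:
        # Assumption: the `openai-whisper` package is installed.
        import whisper
        model = whisper.load_model(model_name)

    result = model.transcribe(str(audio_path))
    text = result["text"].strip()

    # Write the full transcript as plain text, like the web tool's download.
    Path(out_path).write_text(text + "\n", encoding="utf-8")
    return text
```

Calling `transcribe_to_text("interview.mp3", "interview.txt")` with an audio file of your own would download the model on first use and write the transcript next to your script. Larger models are slower but usually more accurate.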

We hope you enjoy this newsletter!

Please feel free to share it with your friends and colleagues and follow me on socials.